Service Level Objectives and symptom-based alerting, part 1

If you follow application monitoring and the numerous monitoring tools vying for developer attention, you've probably noticed that everyone seems to be talking about service level objectives (or SLOs) all of a sudden. There are plenty of resources on the web defining what SLOs are – if you haven't seen them, I'll include some links at the end of this post. Instead, here I'd like to explain why the ideas behind SLOs are relevant to any application monitoring project.

Let's start with a war story. At a past job, I worked on a cloud-based application that was essentially a data pipeline. Data from our customers came in, ran through a series of processing steps, and time series data came out the other end. The company, and our team, were growing rapidly. All of this growth naturally meant more people relied on this application every day, and my team was eager to prove that we could keep evolving the code rapidly while preventing outages.

We had our work cut out for us, though. The intermediate stages in our pipeline were set up like polling queue consumers: they would periodically wake up, see if the previous stage had some data ready for them, do their calculations, and then write the results for the next stage to pick up. What could go wrong? A lot of things, it turned out! Bugs could cause calculations to fail and bail out before the results could be written, and memory exhaustion could cause similar behavior. The data itself was time-sensitive, so too much latency – whether in the application itself or the cloud services that it depended on – could also mean an unacceptable slowdown and effective data loss.

We had been using this architecture for a few years at this point, so we had monitoring in place to catch all these problems. We'd instrumented our application code, so if something threw an exception while processing data, we'd know about it. That same instrumentation measured latency and alerted us if the system was in danger of getting too slow. In some cases, we backed up the instrumentation with log-based and resource-based alerting, so even if our application processes got bogged down under heap pressure and failed to report these problems, we'd still be able to respond quickly.

One evening, after most of the team had gone home for the day, some overeager automation restarted all of the application processes responsible for one stage of the pipeline. While this was not a common occurrence, the application was designed to handle such events with minimal disruption. In the worst case, we'd expect a small gap in the time series data coming out the end of the pipeline. Our monitoring reported no problems, so surely everything was fine.

A few hours later, though – when the customer support team reached out to our on-call engineer to report a deluge of customer complaints – we discovered to our horror that the pipeline had in fact been broken since the series of restarts. How could this have happened? Even more importantly, why didn't our monitoring catch it? After all, we had invested significant engineering time in detecting problems and quickly remediating them. How did our monitoring let us down?

After reviewing the monitoring data, we knew that while the restarted processes were running, they were in fact doing no useful work. It was as if the periodic scheduled task they used for pulling data from the previous pipeline stage simply never ran. This was exactly the kind of failure mode our approach to monitoring could not handle. We had designed our alerts to look for specific problems: failed tasks, latency spikes, and resource saturation. In other words, we were alerting on the causes of problems. The trouble with that approach is that unless you can correctly enumerate every possible cause of an outage, you have a gap in your monitoring. We had missed a type of failure where our code simply exited early, without throwing any errors. In a complex system, it's practically impossible to anticipate and reliably alert on every possible failure mode.
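To make that gap concrete, here is a minimal Python sketch of a pipeline stage that exits early without raising anything. It is not the code from the real system; the `Stage` class, the `in_service` flag, and the metric names are all hypothetical, chosen only to show how cause-based checks can stay quiet while no data is produced.

```python
from dataclasses import dataclass, field


@dataclass
class StageMetrics:
    # Cause-oriented signals (hypothetical names).
    errors: int = 0
    slow_polls: int = 0
    # Symptom-oriented signal: how many records the stage actually emitted.
    records_out: int = 0


@dataclass
class Stage:
    # Hypothetical flag standing in for any silent early-exit condition.
    in_service: bool = True
    metrics: StageMetrics = field(default_factory=StageMetrics)

    def poll_once(self, pending):
        """One wake-up of the consumer; returns the records it emitted."""
        if not self.in_service:
            # Early exit: no exception, no latency, nothing for an alert to see.
            return []
        results = []
        for record in pending:
            try:
                results.append(record * 2)  # stand-in for the real calculation
            except Exception:
                self.metrics.errors += 1
        self.metrics.records_out += len(results)
        return results


def cause_alerts_firing(metrics):
    """Cause-based alerting: fires only on failures we thought to enumerate."""
    return metrics.errors > 0 or metrics.slow_polls > 0


healthy = Stage()
broken = Stage(in_service=False)
healthy_out = healthy.poll_once([1, 2, 3])
broken_out = broken.poll_once([1, 2, 3])
```

In this sketch the broken stage emits nothing, yet `cause_alerts_firing(broken.metrics)` stays false. The only signal that differs between the two stages is `records_out`, which is the kind of measurement the cause-based alerts never looked at.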

If I were to work on a system like this again, I'd take a different approach. Rather than alert on causes, I'd alert on symptoms. In other words, if there's a problem in the application, think about how it could manifest to the user. For some applications, this might involve only looking at a few metrics. A simple web application, for example, might be sufficiently monitored via alerting on request rate, error rate, and latency. But in the case of our data pipeline, had we thought about symptoms, it would have been clear that a sudden drop in the rate of data flowing out one end is a likely symptom of a problem and thus should have been included in our monitoring strategy.
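As a rough illustration of what such a symptom check could look like, the Python sketch below compares recent output volume against a baseline. The function name, the per-interval record counts, and the `min_ratio` threshold are assumptions made for this example, not part of any particular monitoring tool.

```python
from statistics import mean


def output_rate_dropped(recent_counts, baseline_counts, min_ratio=0.5):
    """Return True when recent output falls well below the baseline.

    recent_counts / baseline_counts: records emitted per interval.
    min_ratio: hypothetical threshold; tune it for the real workload.
    """
    if not recent_counts or not baseline_counts:
        return False  # not enough data to compare
    baseline = mean(baseline_counts)
    if baseline == 0:
        return False  # nothing was flowing before, so there is no drop to detect
    return mean(recent_counts) < min_ratio * baseline


# The pipeline keeps running but emits nothing: the symptom check fires.
stalled = output_rate_dropped([0, 0, 0], [100, 110, 90])
# Normal fluctuation around the baseline: the check stays quiet.
steady = output_rate_dropped([95, 105], [100, 110, 90])
```

A check like this does not care why the output stopped, which is the point: it would catch a crash, a stuck scheduler, or a silent early exit equally well. In a real setup you would probably also want to treat missing data as a problem in its own right rather than returning `False`, as this sketch does.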

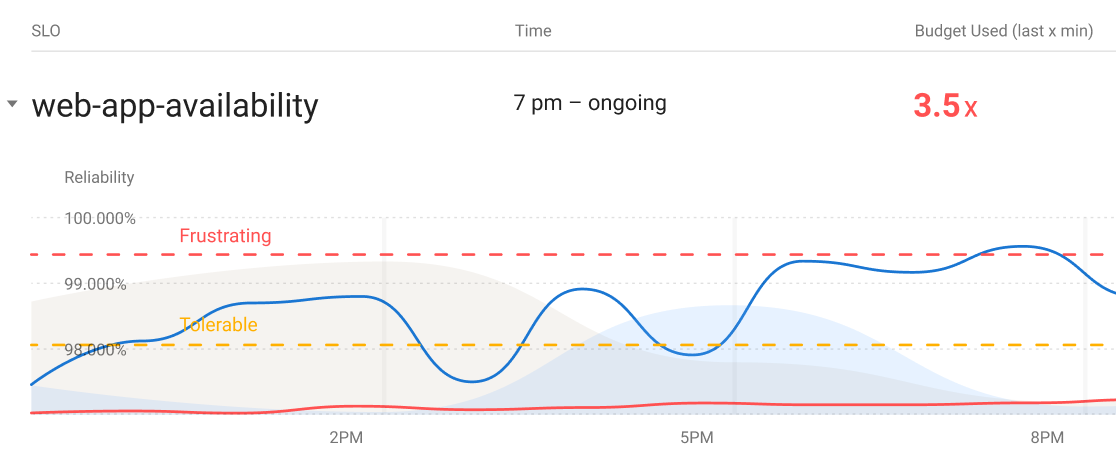

In my next post in this series, I'll dig into how you can implement a symptom-based monitoring strategy with SLOs. Part of the reason symptom-based alerting is so powerful, though, is that you can use this strategy regardless of whether you are using SLOs. Take a look at your own monitoring setup and ask yourself: am I alerting on causes or symptoms?

If you've made it this far, you might be wondering why simply restarting an application process caused it to stop doing the work it was supposed to be doing once it came back up. This was an intentional "feature" of the application that our deployment orchestration used to control when nodes came into service. Sadly, we had changed how we did deployments and no longer used this feature, so it was a vestige of how things used to work before the system matured. We had talked about removing it, but the work hadn't seemed urgent because this detail had never caused problems, until, of course, it did. There's a lesson in managing incidental complexity here too!

Finally, here are some good resources for learning more about SLOs, and their friends like SLIs and error budgets: